Table of Contents

Overview

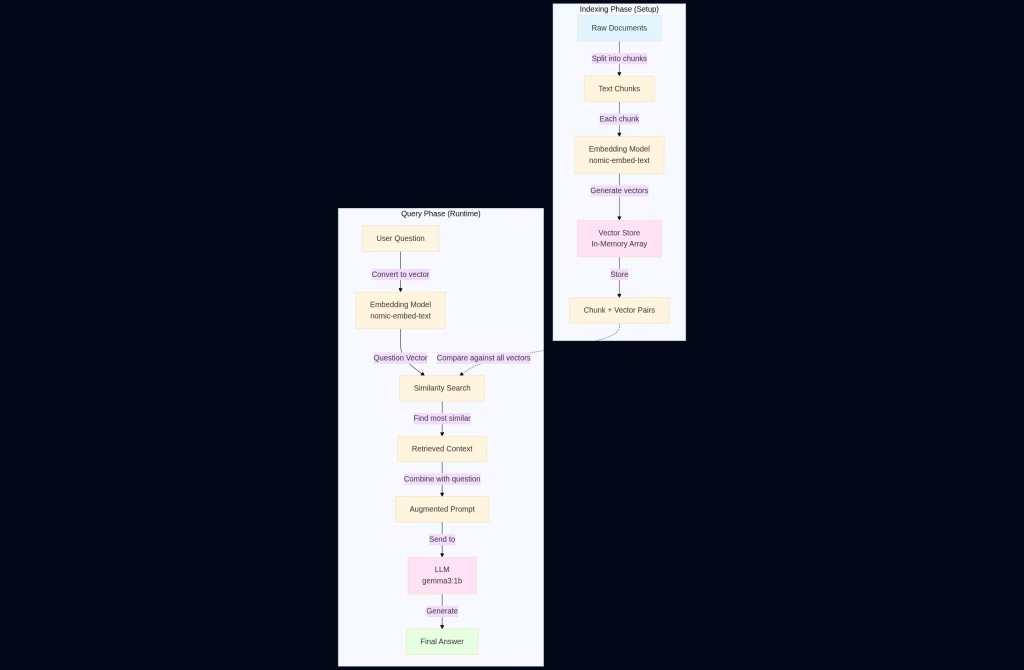

Retrieval-Augmented Generation (RAG) has become one of the most powerful techniques in modern AI applications. Instead of relying solely on an LLM’s training data, RAG allows models to access external knowledge by retrieving relevant information before generating responses.

In this tutorial, we’ll build a minimal RAG system from scratch in about 60 lines of Python. By the end, you’ll understand how RAG works under the hood and have a working example you can modify for your own projects.

What is RAG?

RAG combines two key components:

- Retrieval: Finding relevant information from a knowledge base

- Generation: Using an LLM to generate answers based on that retrieved information

Think of it as giving your AI a reference library. Instead of answering from memory alone, it can look up relevant passages first, leading to more accurate and grounded responses.

The general flow of a RAG application

Prerequisites

Before starting, you’ll need:

- Python 3.7+

- Ollama installed and running locally

- Two Ollama models downloaded:

nomic-embed-text(for embeddings)gemma3:1b(for chat)

Install the models with:

ollama pull nomic-embed-text ollama pull gemma3:1b

You’ll also need these Python packages:

pip install requests numpy

The Complete Code

Here’s our complete RAG implementation:

import requests

import numpy as np

OLLAMA_URL = "http://localhost:11434/api"

EMBED_MODEL = "nomic-embed-text"

CHAT_MODEL = "gemma3:1b"

full_text = """

The Apollo 11 mission launched on July 16, 1969.

Neil Armstrong was the first person to walk on the moon.

Buzz Aldrin joined him 19 minutes later.

Michael Collins stayed in orbit in the command module.

Michael Jackson also stayed at home, in his ship too.

The total duration of the lunar surface EVA was 2 hours and 31 minutes.

"""

chunks = [chunk.strip() for chunk in full_text.split('.') if chunk.strip()]

def get_embedding(text):

response = requests.post(

f"{OLLAMA_URL}/embeddings",

json={"model": EMBED_MODEL, "prompt": text}

)

eb = response.json()["embedding"]

print(f"Embedding done")

return eb

print("Building vector...")

vector_store = []

for chunk in chunks:

vector = get_embedding(chunk)

vector_store.append({

"text": chunk,

"vector": np.array(vector)

})

def retrieve(question):

question_vector = np.array(get_embedding(question))

best_chunk = None

best_score = -1

for item in vector_store:

score = np.dot(item["vector"], question_vector) / (

np.linalg.norm(item["vector"]) * np.linalg.norm(question_vector)

)

if score > best_score:

best_score = score

best_chunk = item["text"]

return best_chunk

user_query = "Who stayed in the ship that was docked in the orbit?"

print(f"\n User: {user_query}")

context = retrieve(user_query)

print(f"\n Retrieved context: {context}")

prompt = f"""

Use this context to answer the question.

Context: {context}

Question: {user_query}

"""

response = requests.post(

f"{OLLAMA_URL}/chat",

json={

"model": CHAT_MODEL,

"stream": False,

"messages": [

{"role": "user", "content": prompt}

]

}

)

print("Answer:", response.json()["message"]["content"])

Breaking Down the Code

Step 1: Document Chunking

chunks = [chunk.strip() for chunk in full_text.split('.') if chunk.strip()]

We split our knowledge base into smaller pieces. In this simple example, we split by periods, but production systems typically use more sophisticated chunking strategies based on semantic boundaries, token limits, or sliding windows.

Step 2: Creating Embeddings

def get_embedding(text):

response = requests.post(

f"{OLLAMA_URL}/embeddings",

json={"model": EMBED_MODEL, "prompt": text}

)

return response.json()["embedding"]

Embeddings are numerical representations of text that capture semantic meaning. Similar texts have similar embeddings. The nomic-embed-text model converts each chunk into a high-dimensional vector.

Step 3: Building the Vector Store

vector_store = []

for chunk in chunks:

vector = get_embedding(chunk)

vector_store.append({

"text": chunk,

"vector": np.array(vector)

})

We create our knowledge base by storing each chunk alongside its embedding vector. In production, you’d use specialized vector databases like Pinecone, Weaviate, or ChromaDB, but a simple list works for learning.

Step 4: Semantic Retrieval

def retrieve(question):

question_vector = np.array(get_embedding(question))

best_chunk = None

best_score = -1

for item in vector_store:

score = np.dot(item["vector"], question_vector) / (

np.linalg.norm(item["vector"]) * np.linalg.norm(question_vector)

)

if score > best_score:

best_score = score

best_chunk = item["text"]

return best_chunk

This is where the magic happens. We:

- Convert the user’s question into an embedding

- Calculate cosine similarity between the question and each chunk

- Return the most similar chunk

Cosine similarity measures the angle between vectors, giving us a score between -1 and 1. Higher scores mean more similar content.

Step 5: Augmented Generation

prompt = f"""

Use this context to answer the question.

Context: {context}

Question: {user_query}

"""

response = requests.post(

f"{OLLAMA_URL}/chat",

json={

"model": CHAT_MODEL,

"stream": False,

"messages": [{"role": "user", "content": prompt}]

}

)

Finally, we provide both the retrieved context and the question to the LLM. This grounds the model’s response in actual retrieved information rather than relying on potentially outdated or incorrect training data.

Running the Example

When you run this code, you’ll see output like:

Building vector... Embedding done Embedding done ... User: Who stayed in the ship that was docked in the orbit? Retrieved context: Michael Collins stayed in orbit in the command module Answer: Michael Collins stayed in the ship docked in orbit.

Notice how the system correctly identifies Michael Collins, even though the text also mentions Michael Jackson staying “in his ship” (a humorous distractor). The semantic search finds the most relevant context.

Why This Matters

Without RAG, the LLM would have to answer from its training data alone. With RAG:

- Accuracy: Answers are grounded in your specific documents

- Currency: You can update knowledge without retraining

- Transparency: You can see which sources informed the answer

- Reduced hallucination: The model works from real retrieved text

Limitations and Next Steps

This hello-world example simplifies many aspects of production RAG systems:

What’s Missing:

- Error handling

- Chunk size optimization

- Top-k retrieval (returning multiple chunks)

- Reranking retrieved results

- Persistent vector storage

- Metadata filtering

- Query expansion and rewriting

Production Improvements:

- Use vector databases (Pinecone, Weaviate, ChromaDB)

- Implement hybrid search (combining semantic and keyword search)

- Add citation tracking

- Use more sophisticated chunking strategies

- Implement caching for embeddings

- Add evaluation metrics

Conclusion

RAG doesn’t have to be complicated. This 60-line example demonstrates the core concept: convert text to embeddings, find similar content, and use it to augment generation. From here, you can expand to handle larger documents, more complex queries, and production-scale deployments.

The beauty of RAG is that it makes AI systems more reliable and maintainable. Instead of hoping your model memorized the right information during training, you explicitly provide it with relevant context for each query.

I build softwares that solve problems. I also love writing/documenting things I learn/want to learn.